What is GraphQL Query Depth Limit Bypass? Ways to Exploit, Examples and Impact

Learn how to identify and exploit GraphQL Query Depth Limit Bypasses. Discover technical payloads, mitigation strategies, and how to protect your APIs.

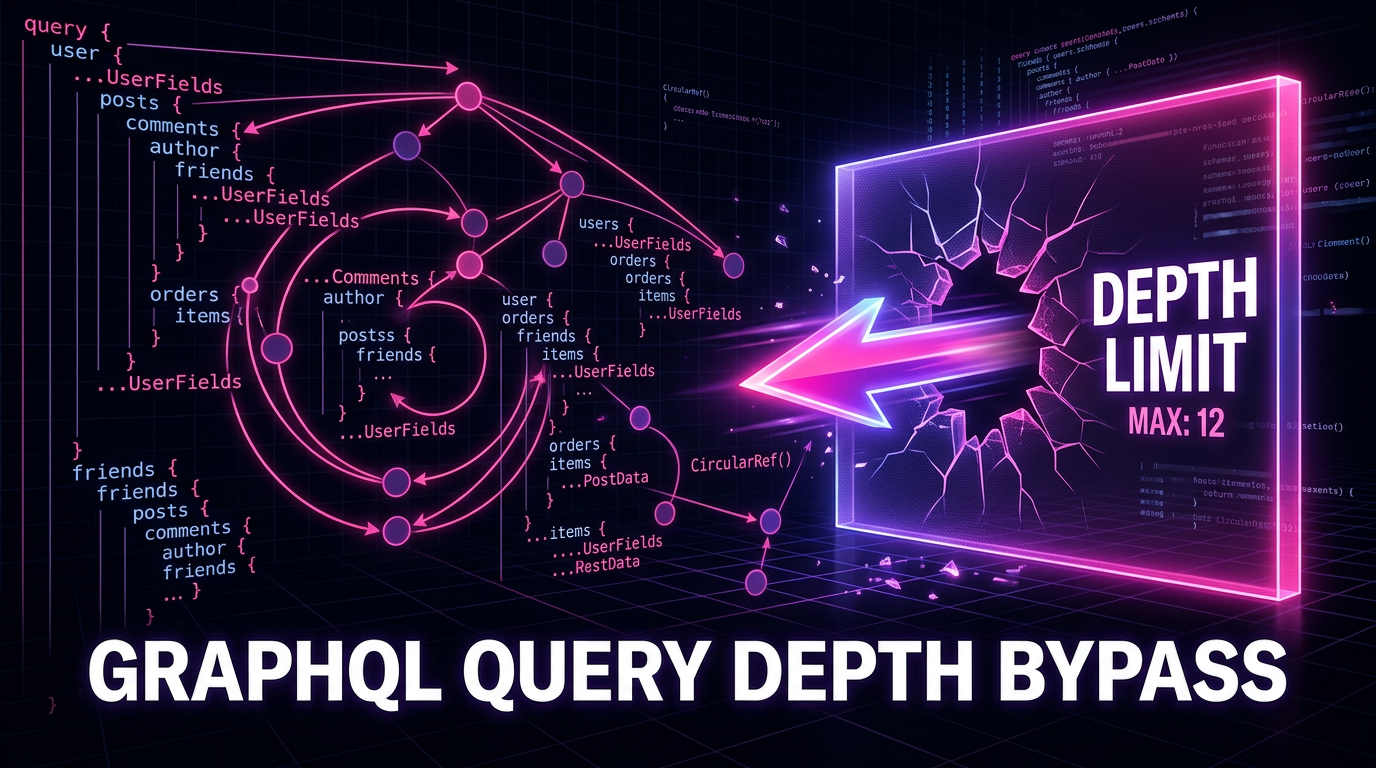

GraphQL has revolutionized the way developers build APIs by providing a flexible, strongly-typed schema that allows clients to request exactly the data they need. However, this flexibility comes with a significant security trade-off: if not properly constrained, a client can craft queries that are so complex or deep that they overwhelm the server's resources. One of the primary defenses against this is a "Query Depth Limit." In this post, we will explore what happens when these limits are poorly implemented, how attackers bypass them, and what you can do to secure your infrastructure.

What is GraphQL?

Before diving into depth limits, it is essential to understand the underlying structure of GraphQL. Unlike traditional REST APIs, which have fixed endpoints returning fixed data structures, GraphQL operates on a graph-based model. In this model, data points are nodes, and the relationships between them are edges. When you query a GraphQL API, you are essentially traversing this graph.

Because the client defines the shape of the response, it can nest objects within objects. For example, a client can request a user's profile, their posts, the comments on those posts, and the authors of those comments, all in a single HTTP request. While efficient, this "traversal" can be abused to create recursive or extremely deep structures that force the server to perform massive amounts of database lookups and compute cycles.

Understanding GraphQL Query Depth

Query depth refers to the number of levels of nesting in a single GraphQL query. Each time you open a new set of curly braces to request fields on a related object, the depth increases by one.

How Depth is Calculated

Consider the following query:

    query getAuthorData {
      author(id: "1") { # Depth 1
        name
        posts { # Depth 2
          title
          comments { # Depth 3
            content
            author { # Depth 4
              id
              name
            }
          }
        }
      }
    }

In this example, the query reaches a depth of 4. Most security-conscious organizations implement a maximum depth limit (e.g., a limit of 5 or 10) to prevent attackers from requesting 100 levels of nested data, which would likely crash the application or the database. To maintain visibility into these types of configurations across your infrastructure, using a tool like Jsmon can help identify exposed GraphQL endpoints.
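
The counting rule can be sketched in a few lines of Python. This is a minimal illustration rather than a production validator: the function name `max_query_depth` is hypothetical, and it only tracks curly-brace nesting after skipping string literals and `#` comments. It does not parse GraphQL, does not follow fragment spreads, and would miscount braces that appear inside input-object arguments, so a real server should compute depth on the parsed query instead.

```python
def max_query_depth(query: str) -> int:
    """Return the deepest level of field nesting in a GraphQL query string.

    Uses the convention from this post: a root field such as `author`
    sits at depth 1, so the result is the deepest brace nesting minus one
    (the innermost braces only hold leaf fields).
    """
    level = 0
    deepest = 0
    in_string = False
    in_comment = False
    escaped = False
    for ch in query:
        if in_comment:
            # A '#' comment runs until the end of the line.
            in_comment = ch != "\n"
        elif in_string:
            if escaped:
                escaped = False
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False
        elif ch == "#":
            in_comment = True
        elif ch == '"':
            in_string = True
        elif ch == "{":
            level += 1
            deepest = max(deepest, level)
        elif ch == "}":
            level -= 1
    return max(deepest - 1, 0)


example = """
query getAuthorData {
  author(id: "1") {
    name
    posts { title comments { content author { id name } } }
  }
}
"""
print(max_query_depth(example))  # prints 4
```

A depth limit is then a single comparison, for example rejecting any query where `max_query_depth(query)` exceeds 5.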

What is a Query Depth Limit Bypass?

A Query Depth Limit Bypass occurs when an attacker finds a way to submit a query that exceeds the intended complexity or depth allowed by the server, despite the presence of a security middleware. This usually happens because the depth-checking logic is flawed or only looks at a specific type of query structure while ignoring others. If an attacker can bypass these limits, they can perform a Denial of Service (DoS) attack, exhausting CPU and memory resources on the API gateway or the backend database.

Common Ways to Exploit Depth Limits

Attackers use several techniques to circumvent depth-limiting middleware. Understanding these methods is the first step toward building more resilient APIs.

1. Alias Overloading

Many depth-limit libraries count the vertical depth of a query but fail to account for horizontal expansion. Aliases allow a client to rename the result of a field. An attacker can use aliases to request the same deep object multiple times in a single query level.

Example Payload:

    query AliasBypass {
      first: author(id: "1") {
        posts { comments { author { name } } } # Depth 4
      }
      second: author(id: "1") {
        posts { comments { author { name } } } # Depth 4
      }
      # ... repeat 100 times
    }

If the server only checks that no single path exceeds depth 5, this query passes. However, the server still has to resolve the depth-4 path 100 times, leading to significant resource consumption. This effectively bypasses the spirit of the depth limit by increasing the "breadth" or "total complexity" of the query.
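
To make the gap between depth and breadth concrete, here is a small Python sketch. It assumes the query has already been parsed into a tree; the `Field` class, the helper names, and the `MAX_DEPTH` and `MAX_FIELDS` thresholds are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Field:
    """One selected field; `alias` is only a label and adds no depth."""
    name: str
    alias: str = ""
    children: List["Field"] = field(default_factory=list)


def depth(selections: List[Field]) -> int:
    """Deepest chain of fields that have sub-selections (this post's convention)."""
    return max((1 + depth(f.children) for f in selections if f.children), default=0)


def field_count(selections: List[Field]) -> int:
    """Total number of fields the server would have to resolve."""
    return sum(1 + field_count(f.children) for f in selections)


def deep_author(alias: str) -> Field:
    """author { posts { comments { author { name } } } } under the given alias."""
    inner = Field("author", children=[Field("name")])
    comments = Field("comments", children=[inner])
    posts = Field("posts", children=[comments])
    return Field("author", alias=alias, children=[posts])


MAX_DEPTH = 5    # assumed limit, as in the text above
MAX_FIELDS = 50  # assumed breadth budget

root = [deep_author(f"a{i}") for i in range(100)]
print(depth(root) <= MAX_DEPTH)         # True: the depth check alone passes
print(field_count(root) <= MAX_FIELDS)  # False: 500 fields exceed the budget
```

The depth stays at 4 no matter how many aliases are added, while the field count grows linearly, which is why a depth check needs a companion limit on total size or cost.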

2. Fragment Spreading and Recursion

Fragments are reusable units in GraphQL. If the depth-limit logic is applied to the query before fragments are expanded (flattened), an attacker can hide depth inside fragments.

Example Payload:

    query FragmentBypass {
      author(id: "1") {
        ...AuthorFragment
      }
    }

    fragment AuthorFragment on Author {
      posts {
        ...PostFragment
      }
    }

    fragment PostFragment on Post {
      comments {
        ...CommentFragment
      }
    }

    fragment CommentFragment on Comment {
      author { name }
    }

If the validator only looks at the query block and doesn't recursively follow the ... fragment spreads, it might calculate the depth as 1 or 2, when the actual execution depth is much higher.
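
The difference between the two behaviours can be sketched in Python. The representation here is an assumption made for brevity: a selection is either `("field", name, sub_selections)` or `("spread", fragment_name)`, and fragments live in a plain dict. The function names are hypothetical and do not come from any particular library.

```python
def naive_depth(selections):
    """Flawed check: counts nested fields but never looks inside fragment spreads."""
    best = 0
    for sel in selections:
        if sel[0] == "field" and sel[2]:
            best = max(best, 1 + naive_depth(sel[2]))
    return best


def expanded_depth(selections, fragments, active=frozenset()):
    """Depth after following every fragment spread.

    `active` holds the fragments currently being expanded so that a
    self-referencing fragment raises an error instead of recursing forever.
    """
    best = 0
    for sel in selections:
        if sel[0] == "spread":
            name = sel[1]
            if name in active:
                raise ValueError(f"fragment cycle through {name!r}")
            best = max(best, expanded_depth(fragments[name], fragments, active | {name}))
        elif sel[2]:
            best = max(best, 1 + expanded_depth(sel[2], fragments, active))
    return best


# The FragmentBypass payload from this section, in the assumed representation.
fragments = {
    "AuthorFragment": [("field", "posts", [("spread", "PostFragment")])],
    "PostFragment": [("field", "comments", [("spread", "CommentFragment")])],
    "CommentFragment": [("field", "author", [("field", "name", [])])],
}
query = [("field", "author", [("spread", "AuthorFragment")])]

print(naive_depth(query))                # prints 1
print(expanded_depth(query, fragments))  # prints 4
```

The cycle guard matters because a validator that expands fragments without one can itself be driven into unbounded recursion by a fragment that spreads itself.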

3. Circular Reference Exploitation

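In many schemas, relationships are bidirectional. A User has Posts, and a Post has an Author (which is a User). This creates a cycle in the type graph. A query is finite text, so it cannot loop forever, but if nesting is not capped the cycle lets an attacker repeat the same pair of fields as deeply as they like.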

Example Payload:

    query CircularBypass {
      user(id: "1") {
        posts {
          author {
            posts {
              author {
                posts {
                  author { name }
                }
              }
            }
          }
        }
      }
    }

While this looks like a standard deep query, in a poorly configured environment the depth limit might be set too high, or the server might fail to detect that the same objects are being fetched repeatedly. Each extra hop through the cycle can multiply the number of objects the server must resolve, and combining circular references with other techniques such as aliases multiplies the load again.
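
Finding these cycles in your own schema is a useful first step when choosing a depth limit. The sketch below assumes the schema has been reduced to a dict that maps each object type to its object-typed fields (scalars omitted); the type and field names mirror the examples in this post, and the function names are hypothetical.

```python
def reachable(schema, start):
    """Every type that can be reached by following fields from `start`."""
    seen = set()
    stack = list(schema.get(start, {}).values())
    while stack:
        type_name = stack.pop()
        if type_name not in seen:
            seen.add(type_name)
            stack.extend(schema.get(type_name, {}).values())
    return seen


def recursive_types(schema):
    """Types that can reach themselves, i.e. types that sit on a cycle."""
    return {t for t in schema if t in reachable(schema, t)}


schema = {
    "Query": {"user": "User"},
    "User": {"posts": "Post"},
    "Post": {"author": "User", "comments": "Comment"},
    "Comment": {"author": "User"},
}

print(sorted(recursive_types(schema)))  # prints ['Comment', 'Post', 'User']
```

Any type in the result can be nested inside itself, so queries that touch those types are the ones a depth or complexity limit has to hold back.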

4. Array-Based Query Batching

Some GraphQL implementations allow sending an array of queries in a single HTTP request. If the depth limit is applied per query but there is no limit on the number of queries in the array, an attacker can bypass the protection.

Example HTTP Request:

    [
      { "query": "{ author(id:1) { posts { title } } }" },
      { "query": "{ author(id:2) { posts { title } } }" },
      ... repeated 500 times
    ]

The server might validate each individual query as having a depth of 2 (which is safe), but executing 500 of them in a single request multiplies the work by 500, so the total load can rival that of a single query far deeper than the limit allows.
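
One straightforward guard is to cap the number of operations accepted in a single request body before any of them is validated or executed. The following Python sketch assumes the body arrives as a JSON string; `MAX_BATCH_SIZE` and `parse_operations` are hypothetical names, and the limit of 10 is an arbitrary example value.

```python
import json

MAX_BATCH_SIZE = 10  # assumed limit; tune to what real clients need


def parse_operations(body: str) -> list:
    """Return the operations in a request body, rejecting oversized batches."""
    payload = json.loads(body)
    # A non-batched request is a single JSON object; normalise it to a list.
    operations = payload if isinstance(payload, list) else [payload]
    if len(operations) > MAX_BATCH_SIZE:
        raise ValueError(
            f"batch of {len(operations)} operations exceeds limit of {MAX_BATCH_SIZE}"
        )
    return operations


single = json.dumps({"query": "{ author(id:1) { posts { title } } }"})
print(len(parse_operations(single)))  # prints 1

flood = json.dumps([{"query": "{ author(id:1) { posts { title } } }"}] * 500)
try:
    parse_operations(flood)
except ValueError as error:
    print(error)
```

Per-query depth and complexity checks would then run on each operation that `parse_operations` returns.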

Real-World Impact of Depth Limit Bypasses

The consequences of a successful bypass are rarely related to data theft (unauthorized access) but are almost always related to availability and infrastructure costs.

- Denial of Service (DoS): The most common impact. By forcing the server to process a massive, nested query, the CPU spikes to 100%, and the memory is exhausted. This makes the API unavailable for legitimate users.

- Database Exhaustion: Deep queries often translate to complex SQL JOINs or multiple recursive NoSQL lookups. This can lock database tables, exhaust connection pools, and slow down the entire backend ecosystem.

- Increased Infrastructure Costs: If your API is hosted on serverless platforms (like AWS Lambda) or auto-scaling clusters, a depth bypass attack can trigger massive scaling events, leading to a surprise bill at the end of the month.

- Upstream Service Failure: Many GraphQL servers act as gateways to internal microservices. A deep query might trigger hundreds of requests to internal services, causing a cascading failure across the entire organization.

How to Prevent and Mitigate Depth Limit Bypasses

Securing a GraphQL API requires a multi-layered approach. You cannot rely on depth limiting alone.

Implement Query Complexity Analysis

Instead of just counting depth, use a complexity scoring system. Assign a "cost" to each field. For example, a scalar field like name costs 1, but a connection field like posts costs 5. You then set a maximum total complexity for the entire query. This effectively stops both deep queries and wide queries (alias overloading).

Libraries like graphql-query-complexity for Node.js are excellent for this purpose.
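
The scoring idea can be sketched in Python. This is not the API of graphql-query-complexity or any other library; it is a simplified additive model that assumes the query is already parsed into `(field_name, sub_selections)` tuples. The cost table and `MAX_COMPLEXITY` are illustrative values based on the example costs above.

```python
FIELD_COSTS = {"posts": 5, "comments": 5}  # assumed costs for connection fields
DEFAULT_COST = 1                           # scalars and plain object fields
MAX_COMPLEXITY = 100                       # assumed budget for one query


def complexity(selections) -> int:
    """Sum the cost of every field in the query, at every level."""
    return sum(
        FIELD_COSTS.get(name, DEFAULT_COST) + complexity(children)
        for name, children in selections
    )


# author { name posts { title comments { content author { id name } } } }
normal_query = [
    ("author", [
        ("name", []),
        ("posts", [
            ("title", []),
            ("comments", [
                ("content", []),
                ("author", [("id", []), ("name", [])]),
            ]),
        ]),
    ]),
]

# 100 aliased copies of author { posts { comments { author { name } } } }.
# Aliases rename results but do not change what must be resolved.
deep_path = ("author", [("posts", [("comments", [("author", [("name", [])])])])])
alias_attack = [deep_path] * 100

print(complexity(normal_query))  # prints 17
print(complexity(alias_attack))  # prints 1300
print(complexity(alias_attack) <= MAX_COMPLEXITY)  # prints False
```

Because every field contributes to the total, the alias payload fails the budget even though no single path in it is deeper than 4. Production scoring schemes often also multiply a list field's cost by its requested page size, which this sketch leaves out.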

Use Static Analysis

Validate the query against the schema and depth rules before any execution begins. Ensure your validation engine correctly flattens fragments and checks the total depth of the expanded tree.

Enforce Timeouts and Memory Limits

At the infrastructure level, ensure that no single request can run for more than a few seconds. If a GraphQL resolver takes too long to fetch nested data, the execution should be killed. Similarly, set memory limits on your API containers to prevent a single malicious request from taking down the entire node.
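
At the application level, a per-request deadline can look like the Python sketch below. It assumes resolvers run as `asyncio` coroutines; `execute_with_timeout` and the error message are hypothetical. Note that `asyncio.wait_for` can only cancel code that yields to the event loop, so CPU-bound or blocking resolvers still need process or container limits.

```python
import asyncio


async def execute_with_timeout(resolve, timeout_seconds: float) -> dict:
    """Run a zero-argument coroutine function and cancel it at the deadline."""
    try:
        data = await asyncio.wait_for(resolve(), timeout_seconds)
        return {"data": data}
    except asyncio.TimeoutError:
        # wait_for has already cancelled the resolver task at this point.
        return {"errors": [{"message": "query exceeded time limit"}]}


async def slow_resolver():
    await asyncio.sleep(1)  # stands in for a deeply nested fetch
    return {"author": {"name": "example"}}


async def fast_resolver():
    return {"author": {"name": "example"}}


print(asyncio.run(execute_with_timeout(fast_resolver, 0.5)))
print(asyncio.run(execute_with_timeout(slow_resolver, 0.01)))
```

The first call returns the data, while the second returns an error payload after roughly 10 ms instead of waiting for the full second.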

Disable Introspection in Production

While not a direct fix for depth limits, disabling introspection makes it harder for an attacker to map out your schema and find the circular relationships or deep paths needed to craft an exploit. If they don't know that Post links back to Author, they can't easily build a circular query.
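
Most GraphQL servers expose a configuration switch for this, and that switch is the right tool. Purely to illustrate what is being blocked, the Python sketch below flags queries that mention the `__schema` or `__type` meta-fields. The function name is hypothetical and the check is a text heuristic, so it can produce false positives, for example when a string argument happens to contain `__schema`.

```python
import re

# Matches the introspection entry points but not the harmless __typename field.
_INTROSPECTION_FIELD = re.compile(r"\b__(?:schema|type)\b")


def looks_like_introspection(query: str) -> bool:
    """Heuristic: True if the query text references __schema or __type."""
    return bool(_INTROSPECTION_FIELD.search(query))


print(looks_like_introspection("{ __schema { types { name } } }"))  # True
print(looks_like_introspection("{ author { __typename name } }"))   # False
```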

Rate Limiting

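Implement rate limiting based on IP addresses or API keys. Because a batched request carries many operations in one HTTP call, count each operation in the batch against the limit (or cap the batch size). Otherwise, a per-request limit will not stop attackers from using query batching to flood your server with hundreds of "shallow" but resource-intensive queries.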
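
A token bucket is one common way to implement this kind of limit. The Python sketch below is a minimal in-memory version under stated assumptions: the `TokenBucket` class, its capacity of 20, and the refill rate of one token per second are illustrative, and a real deployment would usually keep one bucket per API key in a shared store. The `cost` argument lets a batched request be charged once per operation it contains.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the behaviour can be tested
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost=1):
        """Spend `cost` tokens if available; return whether the request may run."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if cost > self.tokens:
            return False
        self.tokens -= cost
        return True


bucket = TokenBucket(capacity=20, refill_per_second=1.0)
print(bucket.allow(cost=5))    # True: a batch of 5 operations fits
print(bucket.allow(cost=500))  # False: a batch of 500 is rejected outright
```

Charging by operation count (or by a complexity score) rather than by HTTP request is what keeps batching from sidestepping the limit.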

Conclusion

GraphQL Query Depth Limit Bypasses are a significant threat to API availability. Because GraphQL shifts the power of data definition to the client, the server must be extremely vigilant about what it agrees to process. By moving beyond simple depth checks and implementing comprehensive query complexity analysis, developers can protect their applications from resource exhaustion and DoS attacks.

To proactively monitor your organization's external attack surface and catch exposures before attackers do, try Jsmon.