What is a Cache Eviction Attack? Ways to Exploit, Examples and Impact

Discover how cache eviction attacks like Prime+Probe work. Learn to identify, exploit, and prevent these side-channel vulnerabilities in modern hardware.

In the pursuit of high-speed computing, modern hardware relies heavily on caching mechanisms to bridge the performance gap between fast processors and relatively slow main memory. However, this efficiency comes with a hidden security cost. Cache eviction attacks represent a sophisticated class of side-channel exploits where an attacker manipulates the CPU cache to leak sensitive information, such as cryptographic keys or private user data, without ever needing direct access to the victim's memory space.

What is a Cache Eviction Attack?

A cache eviction attack is a hardware-level side-channel attack that exploits the deterministic way central processing units (CPUs) manage their internal cache. Because the cache has limited storage, when new data is brought in, older data must be removed or "evicted." By intentionally filling the cache with their own data, an attacker can force a victim's sensitive data out of the cache. By subsequently measuring the time it takes for the victim to perform certain operations, or the time it takes for the attacker to reload their own data, the attacker can infer what memory addresses the victim accessed.

To understand this, we must first look at the underlying architecture. Jsmon frequently encounters infrastructure where underlying hardware vulnerabilities can be exacerbated by software configurations, making an understanding of these low-level attacks vital for modern security professionals.

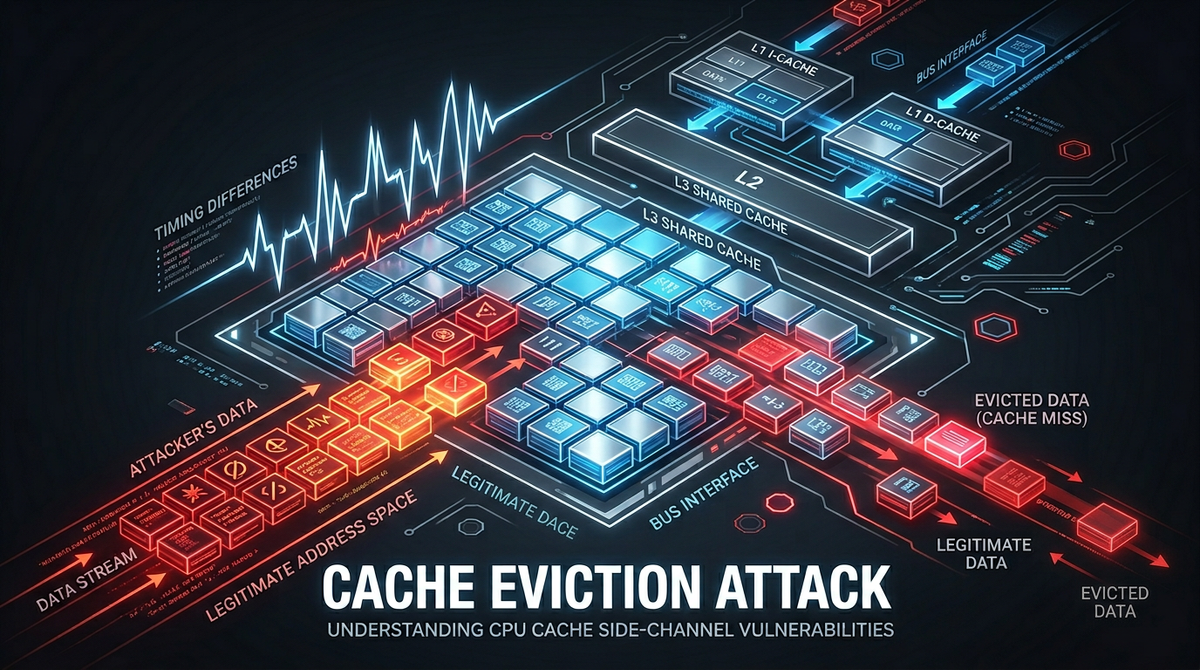

Understanding the CPU Cache Hierarchy

To grasp how eviction works, one must understand how CPUs store data. Modern CPUs use a multi-tiered cache system:

- L1 Cache: Extremely fast, located on the CPU core, usually split into Instruction (L1i) and Data (L1d) caches.

- L2 Cache: Larger and slightly slower than L1, often dedicated to a single core.

- L3 Cache: The largest and slowest of the on-chip caches, typically shared across all cores (Last Level Cache or LLC).

Cache Sets and Associativity

Caches are not just large buckets of random data; they are structured into sets and ways. This is known as an N-way set-associative cache.

- A memory address maps to a specific set in the cache.

- Each set has a fixed number of slots called ways.

- If a set has 8 ways, it can hold 8 different memory blocks simultaneously.

When a CPU needs to store a 9th block of data in an 8-way set, it must choose one of the existing 8 blocks to discard. The choice is made by the replacement (eviction) policy, often a Least Recently Used (LRU) or pseudo-LRU algorithm. Attackers exploit this predictable behavior to clear out a victim's data.
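The 9th-block scenario can be sketched with a small software model of a single set. This is only an illustration: it assumes true LRU (real CPUs often use pseudo-LRU or undocumented policies), and the names `lru_set` and `lru_access` are made up for this example.

```c
#include <assert.h>
#include <stdint.h>

#define WAYS 8  /* associativity used in the example above */

/* One cache set with true-LRU replacement (an idealized model). */
typedef struct {
    uint64_t tag[WAYS];
    uint64_t last_used[WAYS];  /* logical time of the most recent access */
    int      valid[WAYS];
    uint64_t clock;
} lru_set;

/* Access `tag`. Returns 1 on a hit, 0 on a miss.
 * If the miss displaces a valid block, *did_evict is set to 1
 * and *evicted receives the displaced tag. */
int lru_access(lru_set *s, uint64_t tag, uint64_t *evicted, int *did_evict) {
    assert(s && evicted && did_evict);
    *did_evict = 0;
    s->clock++;

    for (int w = 0; w < WAYS; w++) {
        if (s->valid[w] && s->tag[w] == tag) {  /* hit: refresh recency */
            s->last_used[w] = s->clock;
            return 1;
        }
    }

    int victim = -1;
    for (int w = 0; w < WAYS; w++) {            /* prefer an empty way */
        if (!s->valid[w]) { victim = w; break; }
    }
    if (victim < 0) {                           /* set full: pick the LRU way */
        victim = 0;
        for (int w = 1; w < WAYS; w++)
            if (s->last_used[w] < s->last_used[victim]) victim = w;
        *evicted = s->tag[victim];
        *did_evict = 1;
    }

    s->tag[victim] = tag;
    s->valid[victim] = 1;
    s->last_used[victim] = s->clock;
    return 0;
}
```

In this model, loading blocks 1 through 8, touching block 1 again, and then loading block 9 evicts block 2, because block 2 is then the least recently used entry.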

How Cache Eviction Attacks Work: The Mechanics

The fundamental principle of any cache attack is the timing difference. Accessing data from the cache (a "cache hit") is significantly faster than fetching it from main memory (a "cache miss"). An attacker uses a high-resolution timer (like the rdtsc instruction on x86, which reads the CPU's time-stamp counter) to measure these nanosecond-scale differences.
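Before an attacker can tell hits from misses, they need a cutoff between the two latencies. One simple way to get it, sketched below, is to collect timing samples for known hits and known misses and place the threshold halfway between the two medians. The function names are hypothetical, and how the samples are collected is left out.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

static int cmp_u64(const void *a, const void *b) {
    uint64_t x = *(const uint64_t *)a, y = *(const uint64_t *)b;
    return (x > y) - (x < y);
}

/* Median of n samples. Sorts the array in place. */
static uint64_t median_u64(uint64_t *v, size_t n) {
    assert(v && n > 0);
    qsort(v, n, sizeof v[0], cmp_u64);
    return v[n / 2];
}

/* Threshold halfway between the median hit and median miss latency.
 * Medians are used so that a few outliers (interrupts, context
 * switches) do not drag the cutoff around. */
uint64_t calibrate_threshold(uint64_t *hit_cycles, size_t n_hit,
                             uint64_t *miss_cycles, size_t n_miss) {
    uint64_t hit = median_u64(hit_cycles, n_hit);
    uint64_t miss = median_u64(miss_cycles, n_miss);
    assert(miss > hit);  /* otherwise the channel is not usable */
    return hit + (miss - hit) / 2;
}

/* Classify a single measurement. */
int is_cache_miss(uint64_t cycles, uint64_t threshold) {
    return cycles > threshold;
}
```

With made-up hit samples around 42 cycles and miss samples around 205 cycles, the threshold comes out at 123 cycles. Real values vary by CPU and must be measured on the target machine.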

The Attack Cycle

Most cache eviction attacks follow a three-step cycle:

- Preparation: The attacker prepares the cache state (e.g., by filling it with their own data).

- Victim Execution: The attacker waits for the victim process (like an AES encryption routine) to run.

- Measurement: The attacker checks the cache state to see which of their data was evicted, revealing which cache sets the victim used.
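The three steps above can be walked through on a toy model. The sketch below uses a deliberately tiny direct-mapped "cache" (one line per set, 16 sets) where each line only remembers who owns it. None of this reflects real cache geometry, and the type and function names are invented for the example.

```c
#include <assert.h>
#include <stddef.h>

#define NUM_SETS 16  /* toy size, not a real cache geometry */

enum owner { EMPTY, ATTACKER, VICTIM };

/* Direct-mapped toy cache: one line per set, tracking only its owner. */
typedef struct { enum owner line[NUM_SETS]; } toy_cache;

/* Step 1, preparation: the attacker occupies every set. */
void prepare(toy_cache *c) {
    for (size_t s = 0; s < NUM_SETS; s++) c->line[s] = ATTACKER;
}

/* Step 2, victim execution: each access replaces whatever is in its set. */
void victim_run(toy_cache *c, const size_t *sets, size_t n) {
    for (size_t i = 0; i < n; i++) {
        assert(sets[i] < NUM_SETS);
        c->line[sets[i]] = VICTIM;
    }
}

/* Step 3, measurement: any set the attacker no longer owns was used
 * by the victim. Fills used[] with 1/0 and returns the number of
 * sets that were touched. */
size_t measure(const toy_cache *c, int used[NUM_SETS]) {
    size_t count = 0;
    for (size_t s = 0; s < NUM_SETS; s++) {
        used[s] = (c->line[s] != ATTACKER);
        count += (size_t)used[s];
    }
    return count;
}
```

If the victim touches sets 3, 11, and 3 again, the measurement step reports exactly two disturbed sets. On real hardware the ownership check is replaced by a timing measurement.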

Common Cache Eviction Techniques

There are several distinct methods used to perform these attacks, ranging from those requiring shared memory to those that work across completely isolated processes.

1. Prime + Probe

This is one of the most powerful techniques because it does not require the attacker and victim to share memory. It targets the cache sets themselves.

- Prime: The attacker fills specific cache sets with their own data by reading a large array (the "eviction set").

- Wait: The attacker yields the CPU, allowing the victim process to execute.

- Probe: The attacker reads their eviction set again. If a read is slow, it means the victim accessed a memory address that mapped to that specific set, evicting the attacker's data.

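    // Simplified Prime + Probe logic in C (x86, GCC or Clang)
    #include <stdint.h>
    #include <x86intrin.h>             // __rdtsc, _mm_lfence

    #define STRIDE    8                // uint64_t slots per 64-byte line (assumed)
    #define THRESHOLD 150              // hit/miss cutoff in cycles (assumed; calibrate per machine)

    void record_activity(int set);     // logging hook, defined elsewhere

    // Times one line per set for brevity; a full probe walks every way of the set.
    void probe(volatile uint64_t *eviction_set, int sets) {
        for (int i = 0; i < sets; i++) {
            _mm_lfence();              // keep earlier work out of the timed window
            uint64_t start = __rdtsc();
            uint64_t val = eviction_set[i * STRIDE];
            _mm_lfence();              // wait for the load before reading the counter
            uint64_t end = __rdtsc();
            (void)val;
            if ((end - start) > THRESHOLD) {
                // Cache miss: the victim used this set!
                record_activity(i);
            }
        }
    }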
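The Prime step depends on an eviction set: a group of addresses that all map to the same cache set. The sketch below shows one way to pick such addresses out of a buffer, assuming 64-byte lines, 1024 sets, and a set index taken directly from the address bits. That assumption is a simplification. Last-level caches are indexed by physical address and many also hash addresses across slices, so real attacks need extra work to find congruent addresses. The helper names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Assumed geometry, for illustration only. */
#define LINE_SIZE 64u
#define NUM_SETS  1024u

/* Set index of an address: the bits just above the line offset. */
static inline size_t set_index(uintptr_t addr) {
    return (size_t)((addr / LINE_SIZE) % NUM_SETS);
}

/* Fill out[0..ways) with addresses inside [buf, buf + len) that all
 * map to target_set. Returns how many were found, which may be fewer
 * than `ways` if the buffer is too small. */
size_t build_eviction_set(uintptr_t buf, size_t len, size_t target_set,
                          size_t ways, uintptr_t *out) {
    assert(out && target_set < NUM_SETS);

    /* Addresses this many bytes apart land in the same set. */
    const uintptr_t span = (uintptr_t)LINE_SIZE * NUM_SETS;

    /* First line-aligned address at or after buf. */
    uintptr_t a = (buf + LINE_SIZE - 1) / LINE_SIZE * LINE_SIZE;

    /* Advance to the first line that maps to target_set. */
    a += ((target_set + NUM_SETS - set_index(a)) % NUM_SETS) * LINE_SIZE;

    size_t n = 0;
    for (; n < ways && a + LINE_SIZE <= buf + len; a += span)
        out[n++] = a;
    return n;
}
```

For an 8-way cache with this geometry, the buffer must span at least 8 x 64 KiB so that eight congruent lines fit inside it.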

2. Flush + Reload

This technique is more precise but requires shared memory (e.g., shared libraries like libc.so). It relies on the clflush instruction, which allows a process to evict a specific memory address from the entire cache hierarchy.

- Flush: The attacker flushes a specific shared memory address from the cache.

- Wait: The victim executes. If the victim accesses that shared address, it is brought back into the cache.

- Reload: The attacker measures the time to read that same address. A fast read indicates the victim accessed the data.
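A rough sketch of one Flush + Reload round on x86 is shown below. It assumes GCC or Clang with `<x86intrin.h>`, and it takes the victim as a plain callback, which is a simplification: in a real attack the victim is a separate process and the attacker simply waits. The function names and the threshold parameter are assumptions for this example.

```c
#include <assert.h>
#include <stdint.h>
#include <x86intrin.h>  /* _mm_clflush, _mm_mfence, _mm_lfence, __rdtscp */

/* Flush step: evict the line holding `addr` from every cache level. */
static inline void flush(const void *addr) {
    _mm_clflush(addr);
    _mm_mfence();  /* make sure the flush is done before continuing */
}

/* Reload step: time a single load of `addr`, in time-stamp counter ticks. */
static inline uint64_t reload_cycles(const volatile uint8_t *addr) {
    unsigned aux;
    _mm_mfence();
    uint64_t start = __rdtscp(&aux);
    _mm_lfence();
    (void)*addr;                     /* the timed access */
    uint64_t end = __rdtscp(&aux);   /* waits for the load to finish */
    _mm_lfence();
    return end - start;
}

/* One round. Returns 1 if the reload was fast, which suggests the
 * victim touched `shared` while it ran. The threshold is
 * machine-specific and has to be calibrated. */
int flush_reload_round(const volatile uint8_t *shared, void (*victim)(void),
                       uint64_t threshold) {
    assert(shared && victim);
    flush((const void *)shared);
    victim();
    return reload_cycles(shared) < threshold;
}
```

Repeating the round many times and recording the results over time gives the attacker a trace of when the victim used the monitored line.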

3. Evict + Time

This is a "black-box" attack usually targeting specific algorithms. Instead of timing their own memory accesses, the attacker times the victim's whole operation.

- The attacker measures how long a victim's operation (like encryption) takes normally.

- The attacker evicts a specific cache set.

- The attacker measures the victim's operation again. If it takes longer, the evicted set was essential to the victim's operation.
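Because a single timing is noisy, the comparison in the last step is usually made over many runs. A minimal sketch of that decision, using averages and a margin chosen by the attacker, could look like this (the names and the margin are assumptions):

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Arithmetic mean of n timing samples. */
static double mean(const uint64_t *v, size_t n) {
    assert(v && n > 0);
    double sum = 0.0;
    for (size_t i = 0; i < n; i++) sum += (double)v[i];
    return sum / (double)n;
}

/* Returns 1 if evicting the set slowed the victim by more than
 * `margin` cycles on average, which suggests the victim relies on
 * data in that set. */
int set_matters(const uint64_t *baseline, const uint64_t *after_evict,
                size_t n, double margin) {
    return mean(after_evict, n) - mean(baseline, n) > margin;
}
```

With made-up baseline timings around 1000 cycles, a set whose eviction pushes the average to about 1200 cycles is flagged at a margin of 100, while one that leaves it near 1002 is not.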

Real-World Examples and Impact

Cache eviction attacks are not just theoretical; they have been used to break industry-standard security implementations.

Cryptographic Key Recovery

One of the most famous applications is recovering AES (Advanced Encryption Standard) keys. Many software AES implementations use "T-tables", precomputed lookup tables stored in memory. Because the table index depends on the secret key (in the first round, each index is a plaintext byte XORed with a key byte), an attacker using Prime + Probe can observe which parts of a T-table were loaded into the cache. By correlating these observations over several thousand encryptions, the attacker can narrow down and eventually reconstruct the full 128-bit or 256-bit key.
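To make the first-round leak concrete, the sketch below models a single key byte. It assumes a T-table with 4-byte entries, 64-byte cache lines, and a line-aligned table, so 16 entries share a line and the attacker learns only the upper four bits of each index. The function names are invented, and the observations are simulated rather than measured.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Assumed layout: 4-byte entries, 64-byte lines, line-aligned table. */
#define ENTRIES_PER_LINE 16

/* Cache line (0..15) touched by the first-round lookup for one byte.
 * The table index in round one is plaintext XOR key. */
static inline unsigned touched_line(uint8_t plaintext, uint8_t key) {
    return (unsigned)(plaintext ^ key) / ENTRIES_PER_LINE;
}

/* Given (plaintext byte, observed line) pairs, vote for the upper
 * nibble of the key byte. Each clean observation implies
 * key_hi = line XOR (plaintext >> 4); voting absorbs noisy samples. */
unsigned recover_key_high_nibble(const uint8_t *plaintext,
                                 const unsigned *observed_line, size_t n) {
    assert(plaintext && observed_line);
    unsigned votes[16] = {0};
    for (size_t i = 0; i < n; i++)
        votes[(observed_line[i] ^ (plaintext[i] >> 4)) & 0xF]++;

    unsigned best = 0;
    for (unsigned k = 1; k < 16; k++)
        if (votes[k] > votes[best]) best = k;
    return best;
}
```

In this model the lower four bits of the key byte stay hidden, since they only select an entry within a line. Recovering them takes further analysis of later rounds.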

Breaking ASLR

Address Space Layout Randomization (ASLR) is a security feature that makes it harder for attackers to find the location of specific code in memory. However, cache eviction can be used to "de-randomize" memory. By observing which cache sets are used during branch execution, an attacker can map out the memory layout and bypass ASLR protections.

Spectre and Meltdown

The infamous Spectre and Meltdown vulnerabilities brought cache side-channels to the mainstream. These attacks use speculative execution to read memory that a process shouldn't see, and then use a cache side-channel (like Flush + Reload) to leak that data out to the attacker. Even though the CPU eventually realizes it shouldn't have executed those instructions and rolls back the architectural state, the "microarchitectural" state (the cache) remains changed.
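The code pattern at the heart of the bounds-check-bypass variant of Spectre is short. The sketch below shows a victim function of that shape together with the attacker-side decoding step. It only demonstrates the structure: when run normally it behaves exactly as the C semantics say, and the branch training and timing needed for an actual leak are left out. The array names, sizes, and the 512-byte stride are illustrative assumptions.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define PROBE_STRIDE 512  /* spacing so each byte value gets its own cache line (assumed) */

uint8_t array1[16] = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16};
size_t  array1_size = 16;
uint8_t probe_array[256 * PROBE_STRIDE];

/* Architecturally, an out-of-range x skips the body. If the branch is
 * mispredicted, both loads may still run speculatively, leaving
 * probe_array[byte * PROBE_STRIDE] in the cache even after the CPU
 * rolls the architectural state back. */
uint8_t victim_function(size_t x) {
    uint8_t value = 0;
    if (x < array1_size)
        value = probe_array[array1[x] * PROBE_STRIDE];
    return value;
}

/* Attacker-side decoding after timing a reload of all 256 probe
 * lines: the index of the fastest line is the leaked byte value. */
int fastest_line(const uint64_t reload_cycles[256]) {
    assert(reload_cycles);
    int best = 0;
    for (int i = 1; i < 256; i++)
        if (reload_cycles[i] < reload_cycles[best]) best = i;
    return best;
}
```

The decoding step is where the cache side channel comes in: the secret never appears in any register the attacker can read, only in which probe line became fast.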

Impact on Cloud and Multi-tenant Environments

The impact of cache eviction is most severe in cloud environments where multiple Virtual Machines (VMs) share the same physical hardware. Hypervisors provide strong memory isolation, but the L3 cache is typically still shared by all cores on a socket, and so by every VM running on them. An attacker in one VM can "Prime" the L3 cache and observe the activity of a victim in a completely different VM, leading to cross-VM data leakage.

How to Prevent Cache Eviction Attacks

Defending against these attacks is challenging because they exploit the fundamental design of modern hardware. However, several mitigation strategies exist:

1. Constant-Time Programming

The most effective software defense is ensuring that sensitive operations (like cryptography) do not have memory access patterns that depend on secret data. If every encryption takes the same amount of time and accesses the same memory addresses regardless of the key, the cache side-channel provides no information.
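As a small example of removing a secret-dependent access pattern, the sketch below reads one table entry while touching every entry, selecting the wanted value with a bit mask instead of an index or a branch. The function name is hypothetical. Note that an optimizing compiler is free to transform code like this, so production code should come from a vetted constant-time library and be checked at the assembly level.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Return table[secret_index] while reading every entry in order, so
 * the sequence of addresses accessed is the same for any index. */
uint8_t ct_lookup(const uint8_t *table, size_t len, size_t secret_index) {
    assert(table != NULL && len > 0);
    uint8_t result = 0;
    for (size_t i = 0; i < len; i++) {
        /* diff is zero only when i == secret_index */
        size_t diff = i ^ secret_index;
        /* For nonzero diff, (diff | -diff) has its top bit set, so
         * nonzero becomes 1 and zero stays 0. */
        size_t nonzero = (diff | ((size_t)0 - diff)) >> (sizeof(size_t) * 8 - 1);
        /* mask is 0xFF for the wanted entry and 0x00 otherwise */
        uint8_t mask = (uint8_t)(nonzero - 1);
        result |= (uint8_t)(table[i] & mask);
    }
    return result;
}
```

The cost is a full pass over the table for every lookup, which is why this approach suits small tables.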

2. Disabling High-Resolution Timers

Since these attacks rely on measuring nanosecond differences, reducing the precision of timers (like performance.now() in browsers) can make the attacks much harder to execute. However, attackers can often build their own timers using counter threads.
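Timer coarsening itself is simple. A minimal sketch, assuming timestamps in nanoseconds and a hypothetical helper name, rounds every reading down to a fixed granularity:

```c
#include <assert.h>
#include <stdint.h>

/* Round a timestamp down to a multiple of `granularity_ns`. */
uint64_t coarsen_ns(uint64_t t_ns, uint64_t granularity_ns) {
    assert(granularity_ns > 0);
    return t_ns - (t_ns % granularity_ns);
}
```

With a granularity of 100,000 ns, an access that took 80 ns and one that took 250 ns both show an elapsed time of zero when they start and end inside the same tick, so a single measurement no longer separates a hit from a miss.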

3. Cache Partitioning

Newer CPU features, such as Cache Allocation Technology (CAT) in Intel's Resource Director Technology (RDT), allow the OS or hypervisor to partition the L3 cache. Each process or VM is restricted to a subset of cache ways, so it cannot evict data belonging to other partitions.
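Way-based partitioning is usually described with bitmasks, one bit per cache way. The sketch below builds contiguous way masks and checks that two partitions do not overlap. It is a model of the idea only: it does not program any hardware, and the helper names are made up. On Linux, the real interface for this feature is the resctrl filesystem.

```c
#include <assert.h>
#include <stdint.h>

/* Bitmask selecting `count` contiguous ways starting at way `first`. */
uint32_t way_mask(unsigned first, unsigned count) {
    assert(count > 0 && first + count <= 32);
    uint32_t ones = (count == 32) ? UINT32_MAX
                                  : ((UINT32_C(1) << count) - 1);
    return ones << first;
}

/* Two partitions cannot evict each other's lines only if they share
 * no way. */
int partitions_disjoint(uint32_t a, uint32_t b) {
    return (a & b) == 0;
}
```

For example, giving one VM ways 0 to 3 (mask 0x00F) and another ways 4 to 11 (mask 0xFF0) keeps them apart, while a third partition covering ways 3 and 4 would overlap both.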

4. Hardware Instructions

Using hardware-accelerated instructions for cryptography, such as AES-NI, eliminates the need for software lookup tables. Since these instructions perform the math entirely within the CPU registers and logic units without accessing the cache for table lookups, they are inherently resistant to cache-based side-channel attacks.

Conclusion

Cache eviction attacks serve as a stark reminder that software security is only as strong as the hardware it runs on. By exploiting the very mechanisms designed to make our computers faster, attackers can bypass traditional memory isolation and leak the most sensitive secrets of a system. For developers and security engineers, the key takeaway is to prioritize constant-time algorithms and leverage hardware-backed security features whenever possible.
